JVM GC Terminology

The terminology used to describe garbage collection algorithms can be confusing. In this section, we will explain some commonly used terms and their meanings.

- Live set – The live set refers to the number of live objects in a heap. The size of the live set has a significant impact on the amount of work required for garbage collection, as well as the duration of GC pause times. However, modern garbage collectors are often able to optimize the live set size separately from the GC pause times.

-

Heap size - The heap size refers to the total amount of memory that is allocated to the heap. The performance of a garbage collector can be influenced by the size of the heap. The garbage collection algorithm chosen is responsible for cleaning the entire heap or a subset of the heap. The size of the heap can have a direct impact on garbage collection and stop-the-world pause times. In general, larger heaps tend to have longer stop-the-world pause times, especially with older GC algorithms. However, modern garbage collectors use innovative methods to reduce the linear correlation between heap size and stop-the-world pause times.

-

Heap Fragmentation and Compaction - Memory allocation and deallocation can create gaps in memory, which are known as heap fragmentation. If fragmentation is not resolved, the heap can have plenty of space but be unable to allocate memory for new objects because of a lack of contiguous free space. To solve fragmentation, compaction is used to relocate live objects so that the heap has contiguous free space.

-

Mutation/Mutating Thread/Application Threads - In GC literature, "mutating threads" refers to application threads that change object references. These threads modify the object graph and are also known as application threads. The mutation rate is the rate at which references are updated in memory.

-

Stop-the-World - A stop-the-world pause is a complete halt of all application threads to perform a GC cycle. Some GC algorithms require stop-the-world pauses to garbage collect without interference from the application code. During a stop-the-world pause, the heap is unmodified by the application while GC is in progress.

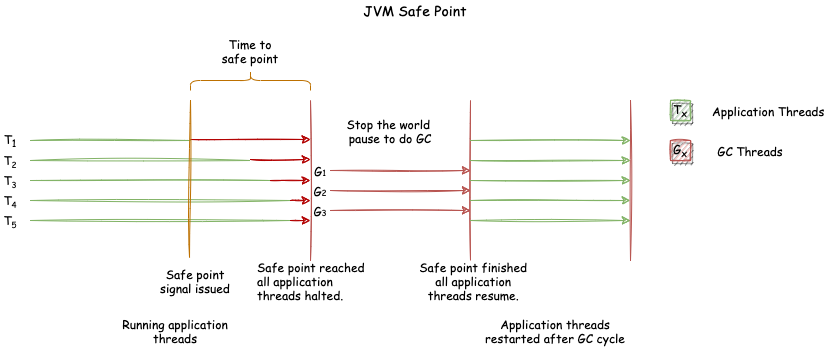

- Safe Point - A safe point is a mechanism that brings application threads to a well-defined point regarding their interaction with the heap. Threads are no longer executing application code, enabling the JVM to perform work that would be difficult to do while threads are running and mutating the heap. The GC implementation signals threads to pause by issuing a safe point call. A safe point provides the GC algorithm with a slot to safely perform GC. Application threads perform regular safe point checks to determine if they should pause. Once a safe point call is issued, all application threads must block at a safe point. Safe point checking is done via polling, where every method regularly checks a particular memory location to determine if a safe point has been issued. The JVM injects safe point checks into every method, usually performed just before a method returns. At a safe point, all heap objects are in a consistent state, and all GC roots are known. Once all application threads have blocked at a safe point, GC can be performed. Safe points are used in several situations, including GC pauses, code deoptimization, flushing code cache, hot swapping of classes, and various debugging operations. The "Safe Point" diagram illustrates the safe point mechanism in a JVM. Running application threads are signalled to come to a safe point. However, it should be noted that application threads can take varying amounts of time to halt. Once all application threads are halted, GC takes place. Once GC has finished, the safe point is removed, and all application threads can continue.

|

|

|---|

| Safe Point |

- Time to Safe Point - The time taken for all application threads to come to a stop is known as Time To Safe Point (TTSP). It is the difference between when the safe point call is issued and when all application threads come to a halt.

- Moving Collectors - Moving collectors move objects into new areas and update all references to an object. This happens after a GC cycle. A non-moving collector, on the other hand, leaves all live objects in the same memory location. This often leads to memory fragmentation. There are two types of moving collectors:

- Evacuating/Copying Collector - An evacuating collector is a type of moving collector. An evacuating GC is one where the memory region collected is left completely empty. All live objects are moved to another memory region. Evacuating collectors perform well since there is no memory compaction overhead. They naturally defragment memory.

- Compacting Collector - A compacting collector is also a type of moving collector. The main goal of a compacting collector is to eliminate memory fragmentation. A compacting GC eliminates memory fragmentation by ensuring that all allocated memory is arranged in a single contiguous region at the end of a GC cycle.

- Incremental Collector - Incremental GC performs a single GC cycle in phases. Incremental GCs interleave a GC cycle in favor of application progress. Incremental GCs run for a specified period doing part of the GC work. Incremental collectors may pause due to various conditions. The collector could terminate due to numerous reasons such as a time budget or a higher priority GC phase that needs to be carried out. Incremental collectors would have done some productive work in the allocated time. This is in contrast to a phase that needs to be fully completed for it to have been productive.

- Serial vs Parallel vs Concurrent Collectors - It is important to understand the difference between Serial, Parallel, and Concurrent collectors to appreciate various GC algorithms. These three concepts are essential and must be internalized.

-

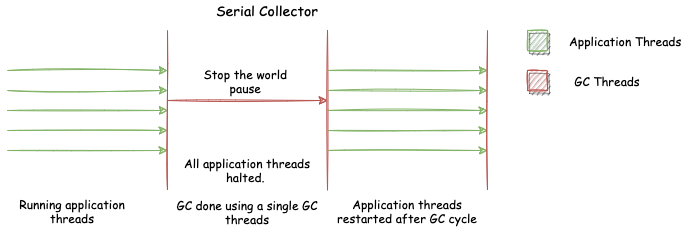

Serial collector - The serial collector is a type of garbage collector that uses a single thread to perform all GC phases. During a GC cycle, application threads are paused. The serial collector is simple and efficient for small-scale applications or systems with limited resources, but it may not be suitable for larger applications due to the long pauses it can cause.

-

|

|

|---|

| Serial Collector |

-

-

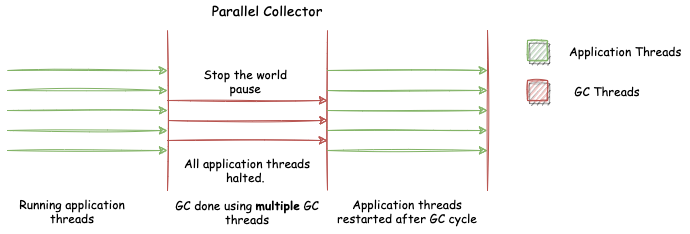

Parallel collectors - Parallel collectors use multiple GC threads to perform various phases of a GC cycle. Unlike serial collectors, parallel collectors operate using multiple threads for garbage collection. However, like serial collectors, parallel collectors pause application threads during GC cycles. The number of threads used by parallel collectors is typically configurable using an application argument.

Parallel Collector -

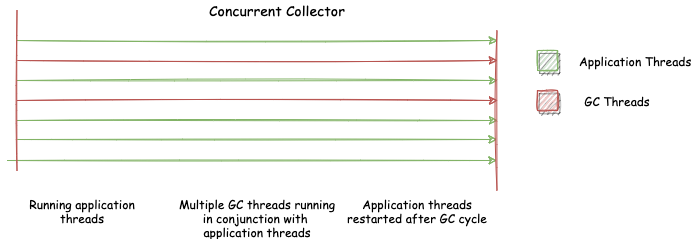

Concurrent collectors - Concurrent collectors work in conjunction with the application, unlike parallel collectors. GC work is carried out while application threads are running, allowing the application to continue running during garbage collection. Concurrent collectors may or may not use multiple GC threads to perform their work, and the number of threads used can vary depending on the GC cycle phase. The number of threads used can also be adjusted through application arguments, similar to parallel collectors.

Concurrent Collector

-

-

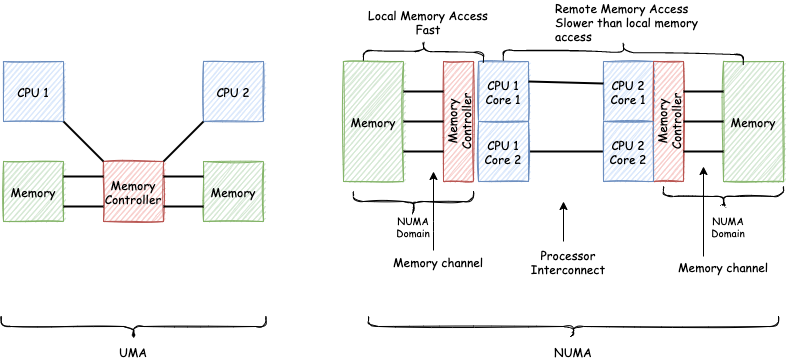

NUMA-aware - Modern multi-socket machines increasingly implement a Non-Uniform Memory Access (NUMA) architecture, which is in stark contrast to previous architectures that implemented Uniform Memory Access (UMA). In a NUMA architecture, memory is divided into separate regions called NUMA domains, each consisting of a set of CPUs/cores and its locally connected memory. Memory access latency is dependent on the memory location relative to the processor, as processors can access their own local NUMA domain faster than a remote one.

Garbage collectors that are aware of NUMA architectures provide memory placement that takes memory location into account, and are called NUMA-aware GCs. These collectors ensure that frequently used memory is locally located, while rarely used memory is remotely located. The diagram above illustrates the difference between UMA and NUMA architectures.

NUMA vs UMA

UMA vs NUMA -

GC Invariant - The term "invariant" is commonly used when describing GC algorithms. However, it can be confusing for those who are not familiar with the concept. In computer science, an invariant is a condition that must be satisfied before a procedure or operation can be executed, and it must continue to hold true after the operation has been completed. GC invariants ensure that the program is in a particular state both before and after a GC operation, which helps to minimize any side effects that may occur as a result of the operation.

No comments yet.